Google Launches AI Demos Including “Talk to Books”: Browse Passages From Books Using Natural Language and Experimental AI

From the Google Research post:

Natural language understanding has evolved substantially in the past few years, in part due to the development of word vectors that enable algorithms to learn about the relationships between words, based on examples of actual language usage. These vector models map semantically similar phrases to nearby points based on equivalence, similarity or relatedness of ideas and language.

Last year, we used hierarchical vector models of language to make improvements to Smart Reply for Gmail. More recently, we’ve been exploring other applications of these methods.

Today, we are proud to share Semantic Experiences, a website showing two examples of how these new capabilities can drive applications that weren’t possible before.

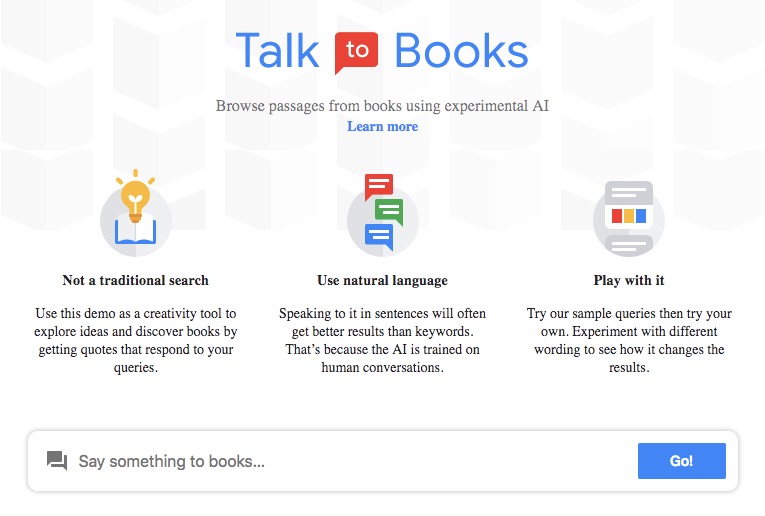

Talk to Books is an entirely new way to explore books by starting at the sentence level, rather than the author or topic level.

From the Talk to Books website:

In Talk to Books, when you type in a question or a statement, the model looks at every sentence in over 100,000 books to find the responses that would most likely come next in a conversation. The response sentence is shown in bold, along with some of the text that appeared next to the sentence for context.

Mastering Talk to Books may take some experimentation. Although it has a search box, its objectives and underlying technology are fundamentally different than those of a more traditional search experience. It’s simply a demonstration of research that enables an AI to find statements that look like probable responses to your input rather than a finely polished tool that would take into account the wide range of standard quality signals. You may need to play around with it to get the most out of it.
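To make the sentence-matching idea in the passage above more concrete, here is a minimal Python sketch. It is not Google's implementation: the real system uses a trained model to judge which sentences are likely responses, while this sketch swaps in plain cosine similarity over tiny hand-made vectors. The function names (`cosine_similarity`, `rank_responses`), the three sample sentences, and all vector values are hypothetical and exist only for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector has no direction, so treat it as unrelated
    return dot / (norm_a * norm_b)


def rank_responses(query_vec, candidates, top_k=2):
    """Return the top_k (score, sentence) pairs, highest score first."""
    scored = [(cosine_similarity(query_vec, vec), sentence)
              for sentence, vec in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


# Hypothetical 3-dimensional "sentence vectors". A real model would produce
# vectors with hundreds of dimensions; these values are invented.
BOOK_SENTENCES = [
    ("Sleep helps the brain consolidate memories.", [0.9, 0.1, 0.0]),
    ("The ship left the harbor at dawn.", [0.0, 0.2, 0.9]),
    ("Rest improves how well we learn.", [0.8, 0.3, 0.1]),
]

# Invented vector standing in for the question "Why do we need sleep?"
QUERY_VECTOR = [1.0, 0.2, 0.0]

top = rank_responses(QUERY_VECTOR, BOOK_SENTENCES)
for score, sentence in top:
    print(f"{score:.3f}  {sentence}")
```

With these made-up vectors, the two sleep-related sentences rank above the unrelated one about the ship, which is the behavior the passage describes: candidates are ordered by how well they fit the input rather than by keyword overlap.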

Semantris is a word association game powered by machine learning, where you type out words associated with a given prompt.

Much More in the Full Text Google Research Post


About Gary Price

Gary Price (gprice@gmail.com) is a librarian, writer, consultant, and frequent conference speaker based in the Washington, D.C. metro area. He earned his MLIS degree from Wayne State University in Detroit. Price has won several awards, including the SLA Innovations in Technology Award and Alumnus of the Year from the Wayne State University Library and Information Science Program. From 2006 to 2009 he was Director of Online Information Services at Ask.com.