Facebook Publishes New Community Standards Enforcement Report

From Facebook:

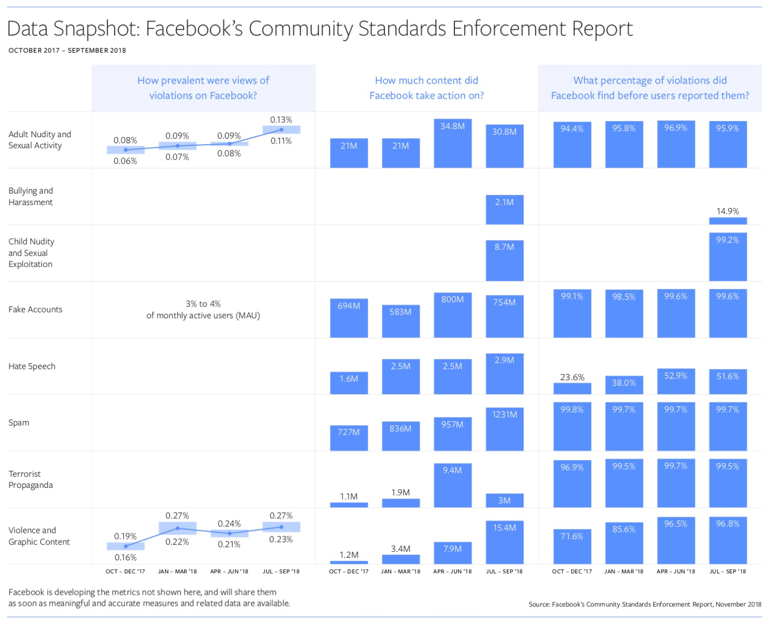

Today, we’re publishing our second Community Standards Enforcement Report. It shows our enforcement of our policies against adult nudity and sexual activity, fake accounts, hate speech, spam, terrorist propaganda, and violence and graphic content for the six months from April 2018 to September 2018. The report also includes data for two new categories: bullying and harassment, and child nudity and sexual exploitation of children.

[Clip]

For the two new categories added to this report (bullying and harassment, and child nudity and sexual exploitation of children), the data will serve as a baseline so we can also measure our progress on these violations over time.

Bullying and harassment tend to be personal and context-specific, so in many instances we need a person to report this behavior to us before we can identify or remove it. This results in a lower proactive detection rate than for other types of violations. In the last quarter, we took action on 2.1 million pieces of content that violated our policies for bullying and harassment, removing 15% of it before it was reported. We proactively discovered this content while searching for other types of violations. The fact that victims typically have to report this content before we can take action can be upsetting for them. We are determined to improve our understanding of these types of abuses so we can get better at proactively detecting them.


About Gary Price

Gary Price (gprice@gmail.com) is a librarian, writer, consultant, and frequent conference speaker based in the Washington, D.C. metro area. He earned his MLIS degree from Wayne State University in Detroit. Price has won several awards, including the SLA Innovations in Technology Award and Alumnus of the Year from the Wayne State University Library and Information Science Program. From 2006 to 2009 he was Director of Online Information Services at Ask.com.